About this course

Welcome to the course notes for STAT 508: Applied Data Mining and Statistical Learning. These notes are designed and developed by Penn State’s Department of Statistics and offered as open educational resources. They are free to use under the Creative Commons license CC BY-NC 4.0.

This course is part of the Online Master of Applied Statistics program offered by Penn State’s World Campus.

Currently enrolled?

If you are a current student in this course, please see Canvas for your syllabus, assignments, lesson videos, and communication from your instructor.

Course Structure

STAT 508 is structured to maximize learning through a ‘hands-on’ approach to data mining and statistical learning. Each lesson features instructional videos that cover key statistical concepts, complemented by guided notes to aid understanding and retention. Practical application is emphasized through R code examples and datasets provided for active engagement. Weekly assignments apply the week’s learning, while each unit culminates in a substantial project that requires integrating multiple skills and concepts from the unit. This structured progression ensures that students not only grasp theoretical foundations but also develop practical statistical analysis skills using R, enhancing their ability to solve real-world problems and interpret data effectively.

Text and Materials

[ISLR2] James G, Witten D, Hastie T, Tibshirani R (2021). An Introduction to Statistical Learning with Applications in R, 2nd ed. Springer: New York, NY. (Due to errata as well as differences in R versions, try to get the latest printing you can find.)

You can download a PDF of the book from the [textbook’s website](https://www.statlearning.com/).

Technology

Throughout the course, students will actively apply statistical concepts using R through guided exercises, assignments, and unit projects.

- R can be downloaded from: https://cran.r-project.org

- RStudio can be downloaded from: https://posit.co/download/rstudio-desktop/

Course Content

Lesson 1: Introduction to Statistical Learning, EDA, and Unsupervised Learning

Objectives

Upon completion of this lesson, you should be able to:

- Explain the difference between supervised and unsupervised learning.

- Explain the difference between regression and classification.

- Explain the difference between inference and prediction.

- Gain proficiency in R programming by understanding its distinction from RStudio, using built-in functions, installing packages and loading libraries, and loading datasets from various sources into R.

- Perform an exploratory data analysis, including the calculation of summary statistics and data visualization, to gain insights from the data.

- Perform data wrangling tasks such as subsetting a dataset and creating new variables.

Lesson 2: Principal Components Analysis

Objectives

Upon completion of this lesson, you should be able to:

- Describe how principal component analysis (PCA) fits into the larger framework of statistical learning.

- Perform PCA using statistical software, including analyzing the proportion of variance explained by each principal component.

- Use PCA to reduce the dimensionality of high dimensional data, visualize the result, and extract insights.

Lesson 3: Clustering

Objectives

Upon completion of this lesson, you should be able to:

- Describe how clustering fits into the larger framework of statistical learning.

- Explain a K-means algorithm and potential implementation issues, such as sensitivity to the initialization and locally optimal solutions.

- Perform K-means clustering using statistical software, including choosing a value for K.

- Describe common types of linkage used in hierarchical clustering.

- Interpret a dendrogram.

- Perform hierarchical clustering using statistical software, including the creation of a dendrogram for visualizing the results.

- Explore the subgroups discovered in a clustering problem and extract meaningful insights.

Lesson 4: Linear Regression

Objectives

Upon completion of this lesson, you should be able to:

- Describe how linear regression fits into the larger framework of statistical learning.

- Explain how parameters are estimated using the least-squares criterion.

- Fit a linear regression model using statistical software, including situations involving polynomial terms, categorical predictors, and/or interaction terms.

- Interpret the output of a linear regression model, including coefficient estimates and quality of fit metrics.

- Explain why cross validation techniques, such as a training/validation split, are used.

- Assess the predictive ability of the linear regression model on new data using measures such as the root mean square error.

Lesson 5: Feature Selection and Regularization

Objectives

Upon completion of this lesson, you should be able to:

- Identify the best subset of predictors for a linear regression model using subset selection methods, such as best subsets or stepwise regression, using statistical software.

- Compare competing linear regression models using a variety of approaches that estimate test set error directly, such as cross validation techniques, or indirectly, such as AIC.

- Explain the concept of bias-variance tradeoff.

- Compare and contrast the methods of ridge regression and LASSO.

- Apply shrinkage/regularization methods for estimating parameters using statistical software, including selecting a value for the tuning parameter using cross validation.

- Interpret the results of LASSO in the context of feature selection.

Lesson 6: Dimension Reduction

Objectives

Upon completion of this lesson, you should be able to:

- Explain the difference between feature selection and dimension reduction in the context of regression.

- Describe the procedure of principal component regression.

- Perform principal component regression using statistical software, including using cross validation to select the number of principal components.

- Describe the procedure of partial least squares.

- Perform partial least squares (PLS) using statistical software, including using cross validation to select an appropriate number of PLS directions.

- Compare the performance of PCR and PLS to other regression models using cross validation techniques.

Lesson 7: Nonlinear Regression

Objectives

Upon completion of this lesson, you should be able to:

- Explain limitations of linear regression.

- Compare and contrast regression splines, smoothing splines, and natural spline regression.

- Use statistical software to build a generalized additive model for predicting the response based on multiple predictors.

- Compare the performance of the nonlinear methods to each other and other regression models using cross validation techniques.

Lesson 8: Linear Classification

Objectives

Upon completion of this lesson, you should be able to:

- Describe how logistic regression and linear discriminant analysis fit into the larger framework of statistical learning.

- Describe the different forms (logit, odds, and probability) of a logistic regression model.

- Fit a logistic regression model using statistical software.

- Interpret the output of a logistic regression model, including coefficient and probability estimates.

- Perform linear discriminant analysis using statistical software.

- Use tools, such as a receiver operating characteristic curve and/or confusion matrix, to assess the performance of a linear classification model.

- Compare the performance of linear classification models using cross validation techniques.

Lesson 9: Nonlinear Classification

Objectives

Upon completion of this lesson, you should be able to:

- Describe how k-nearest neighbors classification and classification trees fit into the larger framework of statistical learning.

- Explain the k-nearest neighbors procedure for classification.

- Perform k-nearest neighbors classification using statistical software, including using cross validation to select the number of neighbors.

- Build a classification tree using statistical software, visualize the results, and explain the variable importance values.

- Explain how we guard against overfitting in the context of classification trees.

- Compare the performance of the nonlinear methods to other classification models using cross validation techniques.

Lesson 10: Ensemble Learning

Objectives

Upon completion of this lesson, you should be able to:

- Compare three classes of ensemble learning techniques: bagging, stacking, and boosting.

- Describe how random forest fits into the larger framework of statistical learning.

- Describe how bagging decision trees differs from random forest.

- Explain a random forest algorithm.

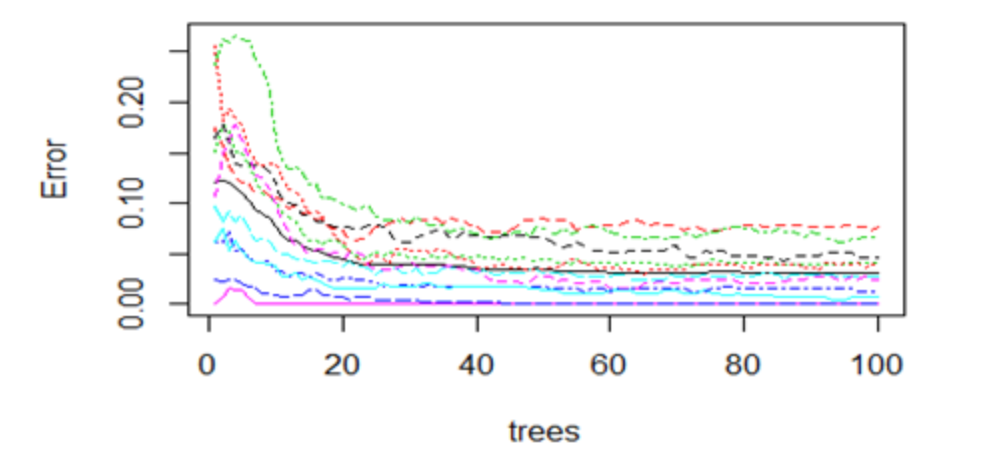

- Perform random forest regression and classification using statistical software.

- Evaluate the performance of random forest using out-of-bag observations and validation data and explain the variable importance values.

- Compare the performance of the ensemble learning methods to other models using cross validation techniques.

Lesson 11: Support Vector Machines

Objectives

Upon completion of this lesson, you should be able to:

- Describe how support vector machines fit into the larger framework of statistical learning.

- Define the maximal margin classifier in the context of classes that are separable by a linear boundary.

- Explain how support vector classifiers, or soft margin classifiers, differ from the maximal margin classifier.

- Explain how support vector machines differ from the support vector classifier.

- Fit a support vector machine classifier using statistical software.

- Compare the performance of a support vector machine to other classification models using cross validation techniques.

Lesson 12: Neural Networks

Objectives

Upon completion of this lesson, you should be able to:

- Describe how neural networks fit into the larger framework of statistical learning.

- Describe the architecture of single-layer and multilayer neural networks.

- Explain the role of activation functions in neural networks and provide examples of commonly used activation functions.