In this section, we learn the following two measures for identifying influential data points:

- Difference in fits (DFFITS)

- Cook's distance

The basic idea behind each of these measures is the same, namely to delete the observations one at a time, each time refitting the regression model on the remaining n–1 observations. Then, we compare the results using all n observations to the results with the ith observation deleted to see how much influence the observation has on the analysis. Analyzed as such, we are able to assess the potential impact each data point has on the regression analysis.

Difference in Fits (DFFITS) Section

The difference in fits for observation i, denoted \(DFFITS_i\), is defined as:

\(DFFITS_i=\dfrac{\hat{y}_i-\hat{y}_{(i)}}{\sqrt{MSE_{(i)}h_{ii}}}\)

As you can see, the numerator measures the difference in the predicted responses obtained when the \(i^{th}\) data point is included and excluded from the analysis. The denominator is the estimated standard deviation of the difference in the predicted responses. Therefore, the difference in fits quantifies the number of standard deviations that the fitted value changes when the \(i^{th}\) data point is omitted.

An observation is deemed influential if the absolute value of its DFFITS value is greater than:

\(2\sqrt{\frac{p+1}{n-p-1}}\)

whereas always n = the number of observations and p = the number of parameters including the intercept. It is important to keep in mind that this is not a hard-and-fast rule, but rather a guideline only! It is not hard to find different authors using slightly different guidelines. Therefore, I often prefer a much more subjective guideline, such as a data point that is deemed influential if the absolute value of its DFFITS value sticks out like a sore thumb from the other DFFITS values. Of course, this is a qualitative judgment, perhaps as it should be, since outliers by their very nature are subjective quantities.

Example 11-2 Revisited

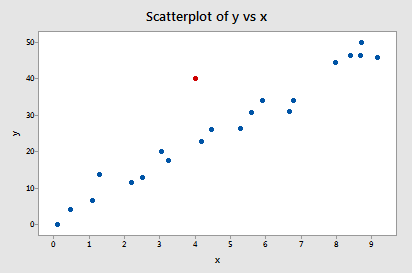

Let's check out the difference in fits measure for this Influence2 data set:

Regressing y on x and requesting the difference in fits, we obtain the following Minitab output:

| Row | x | y | DFIT |

|---|---|---|---|

| 1 | 0.10000 | -0.0716 | -0.378974 |

| 2 | 0.45401 | 4.1673 | -0.105007 |

| 3 | 1.09765 | 6.5703 | -0.162478 |

| 4 | 1.27936 | 13.8150 | 0.36737 |

| 5 | 2.20611 | 11.4501 | -0.17547 |

| 6 | 2.50064 | 12.9554 | -0.16377 |

| 7 | 3.04030 | 20.1575 | 0.10670 |

| 8 | 3.23583 | 17.5633 | -0.09265 |

| 9 | 4.45308 | 26.0317 | 0.03061 |

| 10 | 4.16990 | 22.7573 | -0.05849 |

| 11 | 5.28474 | 26.3030 | -0.16025 |

| 12 | 5.59238 | 30.6885 | -0.02183 |

| 13 | 5.92091 | 33.9402 | 0.05988 |

| 14 | 6.66066 | 30.9402 | -0.34035 |

| 15 | 6.79953 | 34.1100 | -0.18834 |

| 16 | 7.9943 | 44.4536 | 0.10017 |

| 17 | 8.41536 | 46.5022 | 0.09771 |

| 18 | 8.71607 | 50.0568 | 0.29276 |

| 19 | 8.70156 | 46.5475 | -0.02188 |

| 20 | 9.16463 | 45.7762 | -0.33970 |

| 21 | 4.00000 | 40.0000 | 1.55050 |

Using the objective guideline defined above, we deem a data point as being influential if the absolute value of its DFFITS value is greater than:

\(2\sqrt{\dfrac{p+1}{n-p-1}}=2\sqrt{\dfrac{2+1}{21-2-1}}=0.82\)

Only one data point — the red one — has a DFFITS value whose absolute value (1.55050) is greater than 0.82. Therefore, based on this guideline, we would consider the red data point influential.

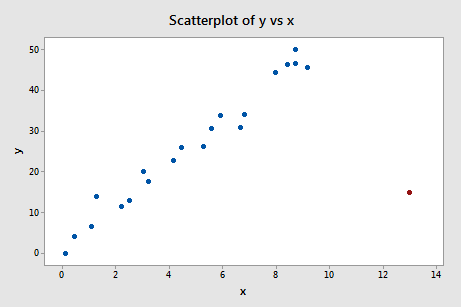

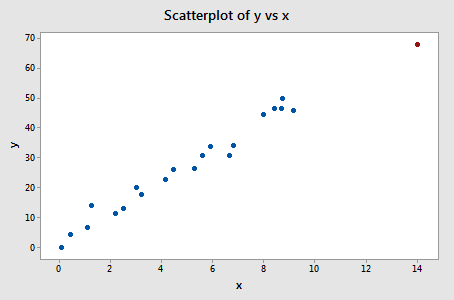

Example 11-3 Revisited

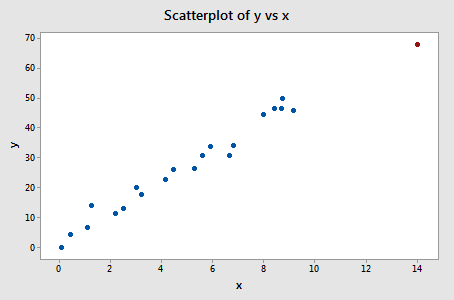

Let's check out the difference in fits measure for this Influence3 data set:

Regressing y on x and requesting the difference in fits, we obtain the following Minitab output:

| Row | x | y | DFIT |

|---|---|---|---|

| 1 | 0.10000 | -0.0716 | -0.52504 |

| 2 | 0.4540 | 4.1673 | -0.08388 |

| 3 | 1.0977 | 6.5703 | -0.18233 |

| 4 | 1.2794 | 13.8150 | 0.75898 |

| 5 | 2.2061 | 11.4501 | -0.21823 |

| 6 | 2.5006 | 12.9554 | -0.20155 |

| 7 | 3.0403 | 20.1575 | 0.27773 |

| 8 | 3.2358 | 17.5633 | -0.08229 |

| 9 | 4.4531 | 26.0317 | 0.13864 |

| 10 | 4.1699 | 22.7573 | -0.02221 |

| 11 | 5.2847 | 26.3030 | -0.18487 |

| 12 | 5.5924 | 30.6885 | 0.05524 |

| 13 | 5.9209 | 33.9402 | 0.19741 |

| 14 | 6.6607 | 30.9228 | -0.42448 |

| 15 | 6.7995 | 34.1100 | -0.17249 |

| 16 | 7.9794 | 44.4536 | 0.29917 |

| 17 | 8.4154 | 46.5022 | 0.30961 |

| 18 | 8.7161 | 50.5068 | 0.63049 |

| 19 | 8.7016 | 46.5475 | 0.14947 |

| 20 | 9.1646 | 45.7762 | -0.25095 |

| 21 | 14.0000 | 68.0000 | -1.23842 |

Using the objective guideline defined above, we deem a data point as being influential if the absolute value of its DFFITS value is greater than:

\(2\sqrt{\frac{p+1}{n-p-1}}=2\sqrt{\frac{2+1}{21-2-1}}=0.82\)

Only one data point — the red one — has a DFFITS value whose absolute value (1.23841) is greater than 0.82. Therefore, based on this guideline, we would consider the red data point influential.

When we studied this data set at the beginning of this lesson, we decided that the red data point did not affect the regression analysis all that much. Yet, here, the difference in fits measure suggests that it is indeed influential. What is going on here? It all comes down to recognizing that all of the measures in this lesson are just tools that flag potentially influential data points for the data analyst. In the end, the analyst should analyze the data set twice — once with and once without the flagged data points. If the data points significantly alter the outcome of the regression analysis, then the researcher should report the results of both analyses.

Incidentally, in this example here, if we use the more subjective guideline of whether the absolute value of the DFFITS value sticks out like a sore thumb, we are likely not to deem the red data point as being influential. After all, the next largest DFFITS value (in absolute value) is 0.75898. This DFFITS value is not all that different from the DFFITS value of our "influential" data point.

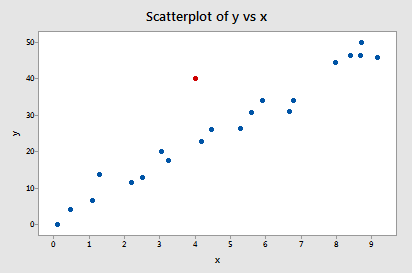

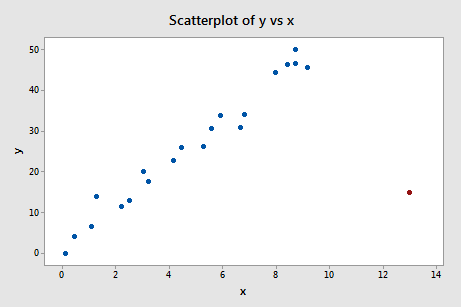

Example 11-4 Revisited

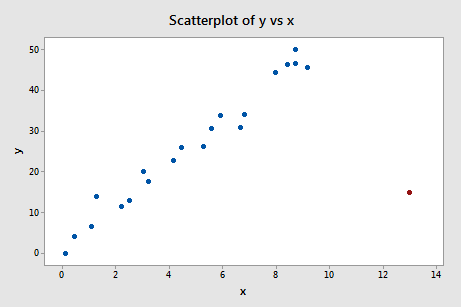

Let's check out the difference in fits measure for this Influence4 data set:

Regressing y on x and requesting the difference in fits, we obtain the following Minitab output:

| Row | x | y | DFIT |

|---|---|---|---|

| 1 | 0.10000 | -0.0716 | -0.4028 |

| 2 | 0.45401 | 4.1673 | -0.2438 |

| 3 | 1.0977 | 6.5703 | -0.2058 |

| 4 | 1.2794 | 13.8150 | 0.0376 |

| 5 | 2.2061 | 11.4501 | -0.1314 |

| 6 | 2.5006 | 12.9554 | -0.1096 |

| 7 | 3.0403 | 20.1575 | 0.0405 |

| 8 | 3.2358 | 17.5633 | -0.0424 |

| 9 | 4.4531 | 26.0317 | 0.0602 |

| 10 | 4.1699 | 22.7573 | 0.0092 |

| 11 | 5.2847 | 26.3030 | 0.0054 |

| 12 | 5.5924 | 30.6885 | 0.0782 |

| 13 | 5.9209 | 33.9402 | 0.1278 |

| 14 | 6.6607 | 30.9402 | 0.0072 |

| 15 | 6.7995 | 34.1100 | 0.0731 |

| 16 | 7.9794 | 44.4536 | 0.2805 |

| 17 | 8.4154 | 46.5022 | 0.3236 |

| 18 | 8.7161 | 50.0568 | 0.4361 |

| 19 | 8.7016 | 46.5475 | 0.3089 |

| 20 | 9.1646 | 45.7762 | 0.2492 |

| 21 | 13.0000 | 15.0000 | -11.4670 |

Using the objective guideline defined above, we again deem a data point as being influential if the absolute value of its DFFITS value is greater than:

\(2\sqrt{\frac{p+1}{n-p-1}}=2\sqrt{\frac{2+1}{21-2-1}}=0.82\)

What do you think? Do any of the DFFITS values stick out like a sore thumb? Errr — the DFFITS value of the red data point (–11.4670) is certainly of a different magnitude than all of the others. In this case, there should be little doubt that the red data point is influential!

Cook's distance measure Section

Just jumping right in here, Cook's distance measure, denoted \(D_{i}\), is defined as:

\(D_i=\dfrac{(y_i-\hat{y}_i)^2}{p \times MSE}\left( \dfrac{h_{ii}}{(1-h_{ii})^2}\right)\)

It looks a little messy, but the main thing to recognize is that Cook's \(D_{i}\) depends on both the residual, \(e_{i}\) (in the first term), and the leverage, \(h_{ii}\) (in the second term). That is, both the x value and the y value of the data point play a role in the calculation of Cook's distance.

In short:

- \(D_{i}\) directly summarizes how much all of the fitted values change when the \(i^{th}\) observation is deleted.

- A data point having a large \(D_{i}\) indicates that the data point strongly influences the fitted values.

Let's investigate what exactly that first statement means in the context of some of our examples.

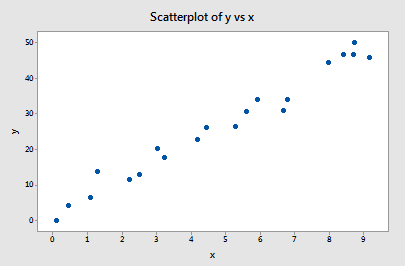

Example 11-1 Revisited

You may recall that the plot of the Influence1 data set suggests that there are no outliers nor influential data points for this example:

If we regress y on x using all n = 20 data points, we determine that the estimated intercept coefficient \(b_0 = 1.732\) and the estimated slope coefficient \(b_1 = 5.117\). If we remove the first data point from the data set, and regress y on x using the remaining n = 19 data points, we determine that the estimated intercept coefficient \(b_0 = 1.732\) and the estimated slope coefficient \(b_1 = 5.1169\). As we would hope and expect, the estimates don't change all that much when removing the one data point. Continuing this process of removing each data point one at a time, and plotting the resulting estimated slopes (\(b_1\)) versus estimated intercepts (\(b_0\)), we obtain:

The solid black point represents the estimated coefficients based on all n = 20 data points. The open circles represent each of the estimated coefficients obtained when deleting each data point one at a time. As you can see, the estimated coefficients are all bunched together regardless of which, if any, data point is removed. This suggests that no data point unduly influences the estimated regression function or, in turn, the fitted values. In this case, we would expect all of the Cook's distance measures, \(D_{i}\), to be small.

Example 11-4 Revisited

You may recall that the plot of the Influence4 data set suggests that one data point is influential and an outlier for this example:

If we regress y on x using all n = 21 data points, we determine that the estimated intercept coefficient \(b_0 = 8.51\) and the estimated slope coefficient \(b_1 = 3.32\). If we remove the red data point from the data set, and regress y on x using the remaining n = 20 data points, we determine that the estimated intercept coefficient \(b_0 = 1.732\) and the estimated slope coefficient \(b_1 = 5.1169\). Wow—the estimates change substantially upon removing the one data point. Continuing this process of removing each data point one at a time, and plotting the resulting estimated slopes (\(b_1\)) versus estimated intercepts (\(b_0\)), we obtain:

Again, the solid black point represents the estimated coefficients based on all n = 21 data points. The open circles represent each of the estimated coefficients obtained when deleting each data point one at a time. As you can see, with the exception of the red data point (x = 13, y = 15), the estimated coefficients are all bunched together regardless of which, if any, data point is removed. This suggests that the red data point is the only data point that unduly influences the estimated regression function and, in turn, the fitted values. In this case, we would expect the Cook's distance measure, \(D_{i}\), for the red data point to be large and the Cook's distance measures, \(D_{i}\), for the remaining data points to be small.

Using Cook's distance measures

The beauty of the above examples is the ability to see what is going on with simple plots. Unfortunately, we can't rely on simple plots in the case of multiple regression. Instead, we must rely on guidelines for deciding when a Cook's distance measure is large enough to warrant treating a data point as influential.

Here are the guidelines commonly used:

- If \(D_{i}\) is greater than 0.5, then the \(i^{th}\) data point is worthy of further investigation as it may be influential.

- If \(D_{i}\) is greater than 1, then the \(i^{th}\) data point is quite likely to be influential.

- Or, if \(D_{i}\) sticks out like a sore thumb from the other \(D_{i}\) values, it is almost certainly influential.

Example 11-2 Revisited

Let's check out the Cook's distance measure for this data set (Influence2 dataset):

Regressing y on x and requesting the Cook's distance measures, we obtain the following Minitab output:

| Row | x | y | COOK1 |

|---|---|---|---|

| 1 | 0.10000 | -0.0716 | 0.073076 |

| 2 | 0.45401 | 4.1673 | 0.005800 |

| 3 | 1.09765 | 6.5703 | 0.013794 |

| 4 | 1.27936 | 13.8150 | 0.067493 |

| 5 | 2.20611 | 11.4501 | 0.015960 |

| 6 | 2.50064 | 12.9554 | 0.013909 |

| 7 | 3.04030 | 20.1575 | 0.005954 |

| 8 | 3.23583 | 17.5633 | 0.004498 |

| 9 | 4.45308 | 26.0317 | 0.000494 |

| 10 | 4.16990 | 22.7573 | 0.001799 |

| 11 | 5.28474 | 26.3030 | 0.013191 |

| 12 | 5.59238 | 30.6885 | 0.000251 |

| 13 | 5.92091 | 33.9402 | 0.001886 |

| 14 | 6.66066 | 30.9228 | 0.056275 |

| 15 | 6.79953 | 34.1100 | 0.018262 |

| 16 | 7.97943 | 44.4536 | 0.005272 |

| 17 | 8.41536 | 46.5022 | 0.005021 |

| 18 | 8.71607 | 50.0568 | 0.043960 |

| 19 | 8.70156 | 46.5475 | 0.000253 |

| 20 | 9.16463 | 45.7762 | 0.058968 |

| 21 | 4.00000 | 40.0000 | 0.363914 |

The Cook's distance measure for the red data point (0.363914) stands out a bit compared to the other Cook's distance measures. Still, the Cook's distance measure for the red data point is less than 0.5. Therefore, based on the Cook's distance measure, we would not classify the red data point as being influential.

Example 11-3 Revisited

Let's check out the Cook's distance measure for this Influence3 data set :

Regressing y on x and requesting the Cook's distance measures, we obtain the following Minitab output:

| Row | x | y | COOK1 |

|---|---|---|---|

| 1 | 0.10000 | -0.0716 | 0.134157 |

| 2 | 0.45401 | 4.1673 | 0.003705 |

| 3 | 1.09765 | 6.5703 | 0.017302 |

| 4 | 1.27936 | 13.8150 | 0.241690 |

| 5 | 2.20611 | 11.4501 | 0.024433 |

| 6 | 2.50064 | 12.9554 | 0.020879 |

| 7 | 3.04030 | 20.1575 | 0.038412 |

| 8 | 3.23583 | 17.5633 | 0.003555 |

| 9 | 4.45308 | 26.0317 | 0.009943 |

| 10 | 4.16990 | 22.7573 | 0.000260 |

| 11 | 5.28474 | 26.3030 | 0.017379 |

| 12 | 5.59238 | 30.6885 | 0.001605 |

| 13 | 5.92091 | 33.9402 | 0.019748 |

| 14 | 6.66066 | 30.9228 | 0.081344 |

| 15 | 6.79953 | 34.1100 | 0.015289 |

| 16 | 7.97943 | 44.4536 | 0.044620 |

| 17 | 8.41536 | 46.5022 | 0.047961 |

| 18 | 8.71607 | 50.0568 | 0.173901 |

| 19 | 8.70156 | 46.5475 | 0.011656 |

| 20 | 9.16463 | 45.7762 | 0.032322 |

| 21 | 14.0000 | 68.0000 | 0.701965 |

The Cook's distance measure for the red data point (0.701965) stands out a bit compared to the other Cook's distance measures. Still, the Cook's distance measure for the red data point is gretaer than 0.5 but less than 1. Therefore, based on the Cook's distance measure, we would perhaps investigate further but not necessarily classify the red data point as being influential.

Example 11-4 Revisited

Let's check out the Cook's distance measure for this Influence4 data set:

Regressing y on x and requesting the Cook's distance measures, we obtain the following Minitab output:

| Row | x | y | COOK1 |

|---|---|---|---|

| 1 | 0.10000 | -0.0716 | 0.08172 |

| 2 | 0.45401 | 4.1673 | 0.03076 |

| 3 | 1.09765 | 6.5703 | 0.02198 |

| 4 | 1.27936 | 13.8150 | 0.00075 |

| 5 | 2.20611 | 11.4501 | 0.00901 |

| 6 | 2.50064 | 12.9554 | 0.00629 |

| 7 | 3.04030 | 20.1575 | 0.00086 |

| 8 | 3.23583 | 17.5633 | 0.00095 |

| 9 | 4.45308 | 26.0317 | 0.00191 |

| 10 | 4.16990 | 22.7573 | 0.00004 |

| 11 | 5.28474 | 26.3030 | 0.00002 |

| 12 | 5.59238 | 30.6885 | 0.00320 |

| 13 | 5.92091 | 33.9402 | 0.00848 |

| 14 | 6.66066 | 30.9228 | 0.00003 |

| 15 | 6.79953 | 34.1100 | 0.00280 |

| 16 | 7.97943 | 44.4536 | 0.03958 |

| 17 | 8.41536 | 46.5022 | 0.05229 |

| 18 | 8.71607 | 50.0568 | 0.09180 |

| 19 | 8.70156 | 46.5475 | 0.04809 |

| 20 | 9.16463 | 45.7762 | 0.03194 |

| 21 | 13.0000 | 15.0000 | 4.04801 |

In this case, the Cook's distance measure for the red data point (4.04801) stands out substantially compared to the other Cook's distance measures. Furthermore, the Cook's distance measure for the red data point is greater than 1. Therefore, based on the Cook's distance measure—and not surprisingly—we would classify the red data point as being influential.

An alternative method for interpreting Cook's distance that is sometimes used is to relate the measure to the F(p, n–p) distribution and to find the corresponding percentile value. If this percentile is less than about 10 or 20 percent, then the case has little apparent influence on the fitted values. On the other hand, if it is near 50 percent or even higher, then the case has a major influence. (Anything "in between" is more ambiguous.)