The first simple linear regression model condition concerns linearity: the mean of the response at each predictor value should be a linear function of the predictor. The neat thing about simple linear regression — in which there is a response y and just one predictor x — is that we can get a good feel for this condition just by looking at a simple scatter plot (so, in this case, we don't even need to look at a residual plot). Let's start by looking at three different examples.

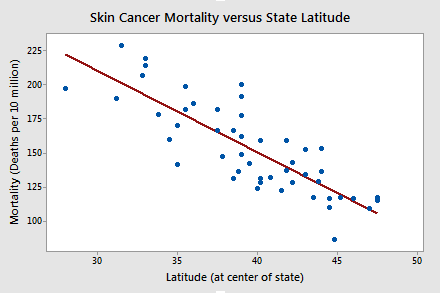

Skin Cancer and Mortality

Do the data suggest that a linear function is adequate in describing the relationship between skin cancer mortality and latitude (Skin Cancer dataset)?

The answer is yes! The relationship between latitude and skin cancer mortality appears to be linear, so a linear function is a reasonable way to summarize the trend in the data.

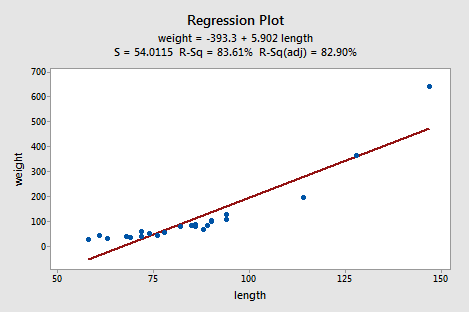

Alligators

The length of an alligator can be estimated fairly accurately from aerial photographs or from a boat. Estimating the weight of the alligator, however, is a much greater challenge. One approach is to use a regression model that summarizes the trend between the length and weight of alligators. The length of an alligator obtained from an aerial photograph or boat can then be used to predict the weight of the alligator. In taking this approach, some wildlife biologists captured a random sample of n = 25 alligators. They measured the length (x, in inches) and weight (y, in pounds) of each alligator. (Alligator dataset)

Do the resulting data suggest that a linear function is adequate in describing the relationship between the length and weight of an alligator?

The answer is no! Don't you think a curved function would more adequately describe the trend? The scatter plot gives us a pretty good indication that a linear model is inadequate in this case.
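One way to back up what the eye sees in a scatter plot is to fit a straight line anyway and check whether the residuals are systematically positive or negative in different parts of the x range. The sketch below uses made-up data, not the Alligator dataset: one response is exactly linear in x and the other grows like the cube of x (an assumption chosen only to produce a curve). The names `fit_line` and `residual_means_by_third` are hypothetical helpers.

```python
def fit_line(x, y):
    """Return (intercept, slope) of the least squares line for paired data."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def residual_means_by_third(x, y):
    """Fit a line, then average the residuals in the low, middle, and high thirds of x."""
    b0, b1 = fit_line(x, y)
    pairs = sorted(zip(x, y))  # order the points by x
    resid = [yi - (b0 + b1 * xi) for xi, yi in pairs]
    k = len(resid) // 3
    thirds = [resid[:k], resid[k:len(resid) - k], resid[len(resid) - k:]]
    return [sum(t) / len(t) for t in thirds]


# Hypothetical data for illustration only (NOT the Alligator dataset).
x = list(range(60, 151, 5))                 # 60, 65, ..., 150
straight = [2.0 * xi - 50.0 for xi in x]    # mean response is linear in x
curved = [0.0001 * xi ** 3 for xi in x]     # mean response curves upward

straight_means = residual_means_by_third(x, straight)
curved_means = residual_means_by_third(x, curved)
print("linear data:", straight_means)
print("curved data:", curved_means)
```

For the linear response the three averages are essentially zero. For the curved response the line runs below the points at both ends and above them in the middle, which is the same signal the scatter plot gives visually.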

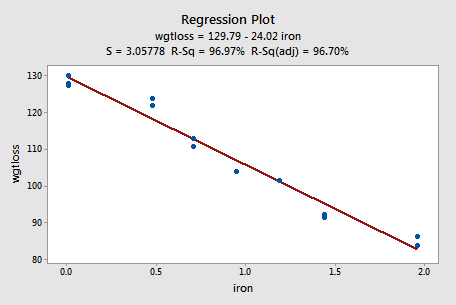

Alloy Corrosion

Thirteen (n = 13) alloy specimens composed of 90% copper and 10% nickel, each with a specific iron content, were tested for corrosion. Each specimen was rotated in seawater at 30 feet per second for 60 days. Corrosion was measured as weight loss in milligrams per square decimeter per day. The researchers were interested in studying the relationship between iron content (x) and weight loss due to corrosion (y). (Corrosion dataset)

Do the resulting data that appear in the following plot suggest that a linear function is adequate in describing the relationship between iron content and weight loss due to corrosion?

The answer is yes! As in the first example, our visual inspection of the data suggests that a linear model would be adequate in describing the trend between iron content and weight loss due to corrosion.

Try It! Visual inspection of plots


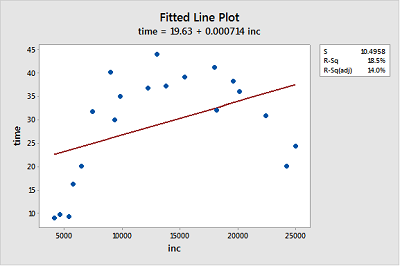

Income and time to the first child. The Income and Birth dataset contains the husbands' annual incomes (inc, in dollars) and the time (time, in months) between marriage and first child for n = 20 couples. (As you can tell by the incomes, the data set is rather old!)

- Create a fitted line plot treating time as the response and inc as the predictor. (See Minitab Help: Creating a fitted line plot).

- Looking at the plot, is a linear function adequate in describing the relationship between inc and time? Explain your answer.

No, the data display a curvilinear relationship between Y = time and X = inc.
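The exercises here use Minitab to draw the fitted line plot. For readers working in Python instead, a rough equivalent might look like the sketch below. It assumes the third-party matplotlib package is installed; `fit_line` and `fitted_line_plot` are hypothetical helper names, and loading the dataset into lists is not shown.

```python
def fit_line(x, y):
    """Return (intercept, slope) of the least squares line for paired data."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def fitted_line_plot(x, y, xlabel, ylabel, path):
    """Save a scatter plot of (x, y) with the least squares line drawn through it."""
    # Imported here so that fit_line can be used without matplotlib installed.
    import matplotlib
    matplotlib.use("Agg")  # draw to a file; no display window is needed
    import matplotlib.pyplot as plt

    b0, b1 = fit_line(x, y)
    fig, ax = plt.subplots()
    ax.scatter(x, y)
    lo, hi = min(x), max(x)
    ax.plot([lo, hi], [b0 + b1 * lo, b0 + b1 * hi])  # the fitted line
    ax.set_xlabel(xlabel)
    ax.set_ylabel(ylabel)
    ax.set_title(f"{ylabel} = {b0:.4g} + {b1:.4g} {xlabel}")
    fig.savefig(path)
    plt.close(fig)
    return b0, b1
```

With the income and time columns read into two lists, a call such as `fitted_line_plot(inc, time, "inc", "time", "income_birth.png")` would write the plot to a file (the file name is arbitrary).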


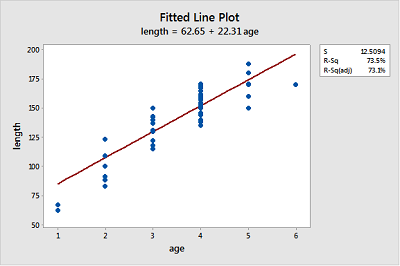

Bluegill fish. The Blue Gills dataset contains the lengths (in mm) and ages (in years) of n = 78 bluegill fish.

- Create a fitted line plot treating length as the response and age as the predictor.

- Looking at the plot, is a linear function adequate in describing the relationship between age and length? Explain your answer.

Probably not, because the growth pattern seems steeper than the fitted line for ages 1-4 and then length seems to level out for ages 5-6.
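When the predictor takes only a few distinct values, as age does here, the linearity condition can be checked quite directly: compute the average response at each predictor value and compare it with the fitted line. The sketch below does this for invented ages and lengths, not the Blue Gills data; `fit_line` and `group_means` are hypothetical helpers.

```python
from collections import defaultdict


def fit_line(x, y):
    """Return (intercept, slope) of the least squares line for paired data."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def group_means(x, y):
    """Return {x value: mean of the y values observed at that x value}."""
    groups = defaultdict(list)
    for xi, yi in zip(x, y):
        groups[xi].append(yi)
    return {xv: sum(ys) / len(ys) for xv, ys in sorted(groups.items())}


# Hypothetical ages (years) and lengths (mm), NOT the Blue Gills dataset.
age = [1, 1, 2, 2, 3, 3, 4, 4, 5, 5, 6, 6]
length = [60, 70, 100, 110, 135, 145, 160, 170, 172, 178, 175, 181]

b0, b1 = fit_line(age, length)
means = group_means(age, length)
# Gap between the observed mean and the fitted line at each age.
gaps = {a: m - (b0 + b1 * a) for a, m in means.items()}
for a, gap in gaps.items():
    print(f"age {a}: mean {means[a]:.1f}, line {b0 + b1 * a:.1f}, gap {gap:+.1f}")
```

In this invented example the group means sit below the line at the youngest and oldest ages and above it in between, the kind of pattern that suggests growth levels off rather than following a straight line.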


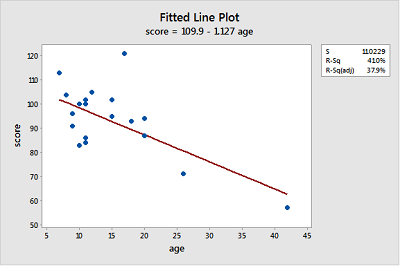

Gesell adaptive scores. The Adaptive dataset contains the Gesell adaptive scores and ages (in months) of n = 21 children with cyanotic heart disease.

- Create a fitted line plot treating score as the response and age as the predictor.

- Looking at the plot, is a linear function adequate in describing the relationship between age and score? Explain your answer.

The linear function describes the relationship reasonably well for most of the data points, but the fitted line seems strongly influenced by the point for age = 42 at the far right. In addition, the point with score = 120 at the top does not seem to fit the overall trend very well.
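A simple way to see how much a single point can pull on the fitted line is to fit the line twice, once with all the points and once with that point left out, and compare the slopes. The sketch below uses invented ages and scores, not the Adaptive dataset, so it illustrates the technique only and says nothing about what happens with the real data. The helper names `fit_line` and `slope_without` are hypothetical.

```python
def fit_line(x, y):
    """Return (intercept, slope) of the least squares line for paired data."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def slope_without(x, y, i):
    """Return the least squares slope after dropping the point at index i."""
    xs = x[:i] + x[i + 1:]
    ys = y[:i] + y[i + 1:]
    return fit_line(xs, ys)[1]


# Hypothetical ages (months) and scores, NOT the Adaptive dataset.
age = [8, 9, 10, 10, 11, 12, 15, 42]
score = [101, 98, 100, 95, 97, 96, 99, 57]

full_slope = fit_line(age, score)[1]
far_right = age.index(max(age))  # index of the point with the largest x
reduced_slope = slope_without(age, score, far_right)
print(f"slope with all points:         {full_slope:.3f}")
print(f"slope without far-right point: {reduced_slope:.3f}")
```

In this made-up example the slope is several times steeper with the far-right point than without it, which is what it means for a point to be influential.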