Example 5-1

Let's jump in and take a look at some "real-life" examples in which a multiple linear regression model is used. Make sure you notice, in each case, that the model has more than one predictor. You might also try to pay attention to the similarities and differences among the examples and their resulting models. Most of all, don't worry about mastering all of the details now. In the upcoming lessons, we will revisit similar examples in greater detail. For now, my hope is that these examples leave you with an appreciation of the richness of multiple regression.

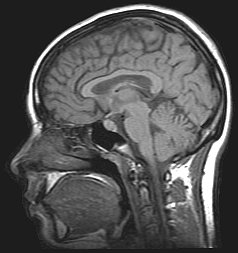

Are a person's brain size and body size predictive of his or her intelligence?

Interested in answering the above research question, some researchers (Willerman et al., 1991) collected the following data (IQ Size data) on a sample of n = 38 college students:

- Response (y): Performance IQ scores (PIQ) from the revised Wechsler Adult Intelligence Scale. This variable served as the investigator's measure of the individual's intelligence.

- Potential predictor (\(x_{1}\)): Brain size based on the count obtained from MRI scans (given as count/10,000).

- Potential predictor (\(x_{2}\)): Height in inches.

- Potential predictor (\(x_{3}\)): Weight in pounds.

As always, the first thing we should want to do when presented with a set of data is to plot it. And, of course, plotting the data is a little more challenging in the multiple regression setting, as there is one scatter plot for each pair of variables. Not only do we have to consider the relationship between the response and each of the predictors, but we also have to consider how the predictors are related to each other.

A common way of investigating the relationships among all of the variables is by way of a "scatter plot matrix." Basically, a scatter plot matrix contains a scatter plot of each pair of variables arranged in an orderly array. Here's what one version of a scatter plot matrix looks like for our brain and body size example:

For each scatter plot in the matrix, the variable on the y-axis appears at the left end of the plot's row and the variable on the x-axis appears at the bottom of the plot's column. Try to identify the variables on the y-axis and x-axis in each of the six scatter plots appearing in the matrix.

Incidentally, in case you are wondering, the tick marks on each of the axes are located at 25% and 75% of the data range from the minimum. That is:

- the first tick = ((maximum - minimum) * 0.25) + minimum

- the second tick = ((maximum - minimum) * 0.75) + minimum
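As a small illustration of the two formulas above, here is a sketch of the tick calculation. The function name `tick_positions` and the axis limits 60 and 80 are made up for the example; they are not taken from the IQ Size data.

```python
def tick_positions(minimum, maximum):
    """Return the tick locations 25% and 75% of the way through the data range."""
    data_range = maximum - minimum
    first_tick = data_range * 0.25 + minimum
    second_tick = data_range * 0.75 + minimum
    return first_tick, second_tick


# Hypothetical axis running from 60 to 80 (not values from the data set)
print(tick_positions(60, 80))  # (65.0, 75.0)
```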

Now, what does a scatter plot matrix tell us? Of course, one use of the plots is simple data checking. Are there any egregious data errors? The scatter plots also illustrate the "marginal relationships" between each pair of variables without regard to the other variables. For example, it appears that brain size is the best single predictor of PIQ, but none of the relationships are particularly strong. In multiple linear regression, the challenge is to see how the response y relates to all three predictors simultaneously.
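A numeric companion to the plots is a matrix of pairwise correlations, which summarizes each marginal relationship with a single number. The sketch below assumes NumPy is available and uses synthetic stand-in values generated at random; they are not the Willerman et al. measurements, and the helper name `marginal_correlations` is hypothetical.

```python
import numpy as np


def marginal_correlations(columns):
    """Pairwise Pearson correlations; `columns` maps variable name -> 1-D array."""
    names = list(columns)
    data = np.vstack([columns[name] for name in names])  # one row per variable
    return names, np.corrcoef(data)


# Synthetic stand-in values for illustration only, NOT the real study data.
rng = np.random.default_rng(0)
n = 38
height = rng.normal(68, 4, n)
weight = 2.0 * height + rng.normal(0, 15, n)
brain = 0.5 * height + rng.normal(55, 6, n)
piq = 0.8 * brain + rng.normal(40, 20, n)

names, corr = marginal_correlations(
    {"PIQ": piq, "Brain": brain, "Height": height, "Weight": weight}
)
for name, row in zip(names, corr):
    print(f"{name:>6}", np.round(row, 2))
```

Each off-diagonal entry corresponds to one panel of the scatter plot matrix. Like the panels, it ignores the other variables, which is exactly why the multiple regression model is needed.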

We always start a regression analysis by formulating a model for our data. One possible multiple linear regression model with three quantitative predictors for our brain and body size example is:

\(y_i=(\beta_0+\beta_1x_{i1}+\beta_2x_{i2}+\beta_3x_{i3})+\epsilon_i\)

where:

- \(y_{i}\) is the intelligence (PIQ) of student i

- \(x_{i1}\) is the brain size (MRI) of student i

- \(x_{i2}\) is the height (Height) of student i

- \(x_{i3}\) is the weight (Weight) of student i

and the independent error terms \(\epsilon_{i}\) follow a normal distribution with mean 0 and equal variance \(\sigma^{2}\).

A couple of things to note about this model:

- Because we have more than one predictor (x) variable, we use slightly modified notation. The x-variables (e.g., \(x_{i1}\), \(x_{i2}\), and \(x_{i3}\)) are now subscripted with a 1, 2, and 3 as a way of keeping track of the three different quantitative variables. We also subscript the slope parameters with the corresponding numbers (e.g., \(\beta_{1}\), \(\beta_{2}\), and \(\beta_{3}\)).

- The "LINE" conditions must still hold for the multiple linear regression model. The linear part comes from the formulated regression function, which is what we call "linear in the parameters." This simply means that each beta coefficient multiplies a predictor variable or a transformation of one or more predictor variables. We'll see in Lesson 9 that this means that, for example, the model \(y=\beta_0+\beta_1x+\beta_2x^2+\epsilon\) is a multiple linear regression model even though it represents a curved relationship between \(y\) and \(x\).
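To make "linear in the parameters" concrete, here is a minimal sketch, assuming NumPy is available, that fits the quadratic model mentioned above by ordinary least squares. The data are hypothetical and noise-free, generated from \(y = 1 + 2x + 3x^2\), so the fit should recover coefficients of approximately 1, 2, and 3.

```python
import numpy as np

# Hypothetical, noise-free data generated from y = 1 + 2x + 3x^2
x = np.array([-2.0, -1.0, 0.0, 1.0, 2.0, 3.0])
y = 1.0 + 2.0 * x + 3.0 * x**2

# Design matrix: each column is multiplied by exactly one beta coefficient.
# The third column is a transformation of x, but the model is still linear
# in beta_0, beta_1, and beta_2.
X = np.column_stack([np.ones_like(x), x, x**2])

# Ordinary least squares estimate of (beta_0, beta_1, beta_2)
beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)
print(np.round(beta_hat, 6))
```

The curvature lives entirely in the columns of the design matrix, so the same least squares machinery applies whether the predictors are raw variables or transformations of them.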

Of course, our interest in performing a regression analysis is almost always to answer some sort of research question. Can you think of some research questions that the researchers might want to answer here? How about the following set of questions? What procedure would you use to answer each research question? (Do the procedures that appear in parentheses seem reasonable?)

- Which, if any, of the predictors (brain size, height, or weight) explain some of the variation in intelligence scores? (Conduct hypothesis tests for individually testing whether each slope parameter could be 0.)

- What is the effect of brain size on PIQ, after taking into account height and weight? (Calculate and interpret a confidence interval for the brain size slope parameter.)

- What is the PIQ of an individual with given brain size, height, and weight? (Calculate and interpret a prediction interval for the response.)

Let's take a look at the output we obtain when we ask Minitab to estimate the multiple regression model we formulated above:

Regression Analysis: PIQ versus Brain, Height, Weight

Analysis of Variance

| Source | DF | Adj SS | Adj MS | F-Value | P-Value |
|---|---|---|---|---|---|
| Regression | 3 | 5572.7 | 1857.58 | 4.74 | 0.007 |
| Brain | 1 | 5239.2 | 5239.23 | 13.37 | 0.001 |
| Height | 1 | 1934.7 | 1934.71 | 4.94 | 0.033 |
| Weight | 1 | 0.0 | 0.0 | 0.00 | 0.998 |
| Error | 34 | 13321.8 | 391.82 | | |
| Total | 37 | 18894.6 | | | |

Model Summary

| S | R-sq | R-sq(adj) | R-sq(pred) |
|---|---|---|---|
| 19.7944 | 29.49% | 23.27% | 12.76% |

Coefficients

| Term | Coef | SE Coef | T-Value | P-Value | VIF |
|---|---|---|---|---|---|
| Constant | 111.4 | 63.0 | 1.77 | 0.086 | |
| Brain | 2.060 | 0.563 | 3.66 | 0.001 | 1.58 |
| Height | -2.73 | 1.23 | -2.22 | 0.033 | 2.28 |
| Weight | 0.001 | 0.197 | 0.00 | 0.998 | 2.02 |

Regression Equation

PIQ = 111.4 + 2.060 Brain - 2.73 Height + 0.001 Weight

My hope is that you immediately observe that much of the output looks the same as before! The only substantial differences are:

- More predictors appear in the estimated regression equation and therefore also in the column labeled "Term" in the coefficients table.

- There is an additional row for each predictor term in the Analysis of Variance Table. By default in Minitab, these represent the reductions in the error sum of squares for each term relative to a model that contains all of the remaining terms (so-called Adjusted or Type III sums of squares). It is possible to change this using the Minitab Regression Options to instead use Sequential or Type I sums of squares, which represent the reductions in error sum of squares when a term is added to a model that contains only the terms before it.

We'll learn more about these differences later, but let's focus now on what you already know. The output tells us that:

- The \(R^{2}\) value is 29.49%. This tells us that 29.49% of the variation in intelligence, as quantified by PIQ, is explained by taking into account brain size, height, and weight.

- The Adjusted \(R^{2}\) value — denoted "R-sq(adj)" — is 23.27%. When considering different multiple linear regression models for PIQ, we could use this value to help compare the models.

- The P-values for the t-tests appearing in the coefficients table suggest that the slope parameters for Brain (P = 0.001) and Height (P = 0.033) are significantly different from 0, while the slope parameter for Weight (P = 0.998) is not.

- The P-value for the analysis of variance F-test (P = 0.007) suggests that the model containing Brain, Height, and Weight is more useful in predicting intelligence than not taking into account the three predictors. (Note that this does not tell us that the model with the three predictors is the best model!)
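Several of the quantities interpreted above can be reproduced by hand from the sums of squares, degrees of freedom, coefficients, and standard errors in the Minitab output. The sketch below is only an arithmetic check using the rounded values printed in the tables, so the results agree with Minitab up to rounding. The variable names are our own, not Minitab's.

```python
import math

n = 38                # number of students
num_predictors = 3    # Brain, Height, Weight

# Adjusted sums of squares copied from the Analysis of Variance table
ss_regression = 5572.7
ss_error = 13321.8
ss_total = ss_regression + ss_error  # regression and error SS add up to the total

df_regression = num_predictors
df_error = n - num_predictors - 1    # 34

ms_regression = ss_regression / df_regression
ms_error = ss_error / df_error

r_sq = ss_regression / ss_total                     # R-sq
r_sq_adj = 1 - (n - 1) / df_error * (1 - r_sq)      # R-sq(adj)
s = math.sqrt(ms_error)                             # S
f_value = ms_regression / ms_error                  # overall F-Value

# t-statistics are coefficient / standard error (Coefficients table)
t_brain = 2.060 / 0.563
t_height = -2.73 / 1.23

print(f"R-sq      = {r_sq:.2%}")
print(f"R-sq(adj) = {r_sq_adj:.2%}")
print(f"S         = {s:.4f}")
print(f"F         = {f_value:.2f}")
print(f"t (Brain) = {t_brain:.2f}, t (Height) = {t_height:.2f}")
```

The adjusted \(R^{2}\) is smaller than \(R^{2}\) because it penalizes the model for the number of predictors it uses, which is what makes it useful for comparing models of different sizes.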

So, we already have a pretty good start on this multiple linear regression stuff. Let's take a look at another example.